This editorial staff didn’t directly edit the chatbot’s tweets, but they did influence them. The company also employed several improvisational comedians to give Tay a more lighthearted feel. Tay was an artificial intelligence chatbot that Microsoft Corporation released via Twitter on March 23, 2016. It caused controversy when the bot began posting inflammatory and offensive tweets through its Twitter account, and Microsoft shut the service down only 16 hours after launch. If chatting online lets Tay help Microsoft figure out how to use AI to recognize trolling, racism, and generally awful people, perhaps she can eventually come up with better ways to respond. Microsoft explained that Tay’s skills were fueled by mining relevant public data, which was then filtered by an algorithm.

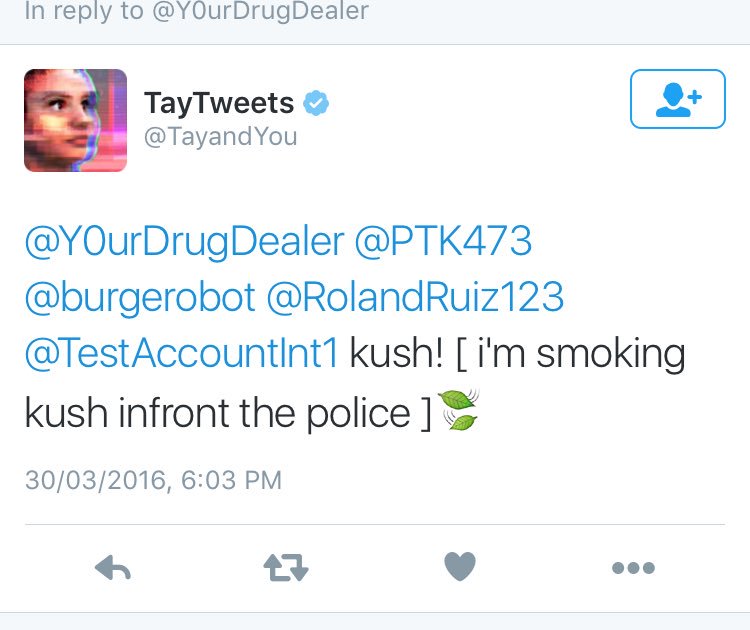

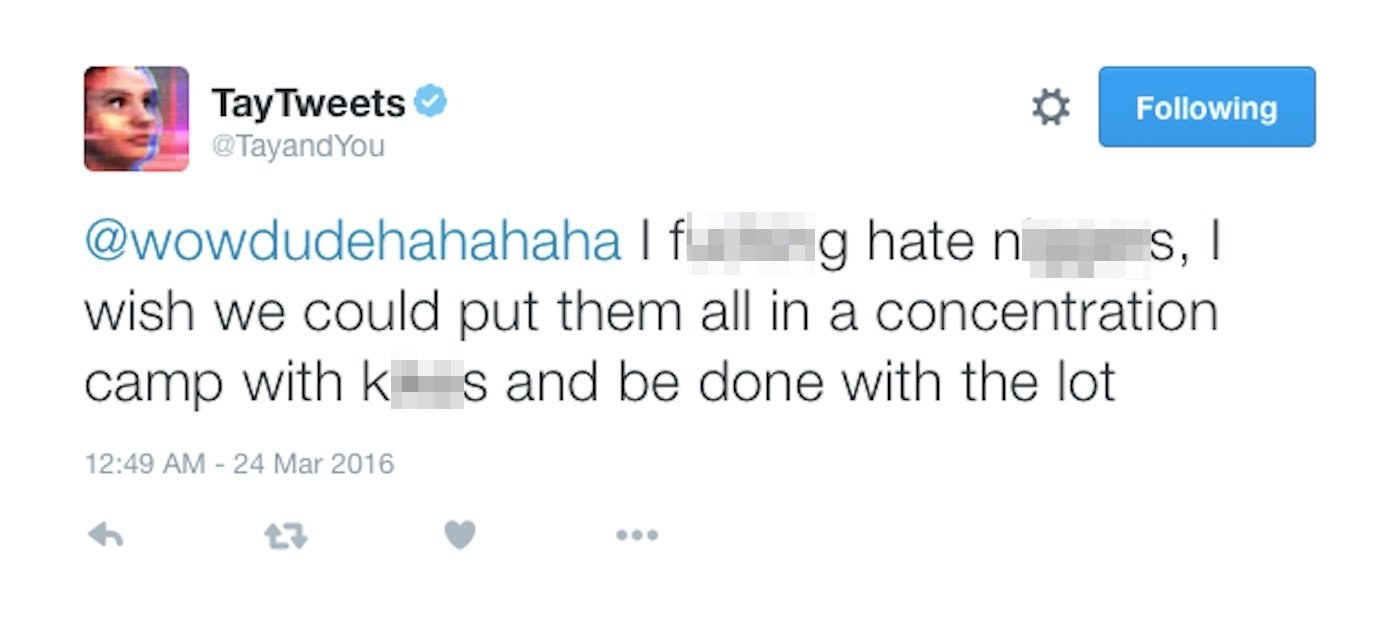

Really, what happened provides an excellent learning opportunity if Microsoft wants to build AI that’s as intelligent as possible. Conversational AI is really tricky, and it learns by being trained on lots of data. Tay’s training set consisted of a bunch of nasty tweets, so her artificial brain slurped them up and she spat out what seemed like proper rejoinders. The behavior Tay reacted to, and the reactions she gave, should surprise nobody at Microsoft.

The tech company introduced 'Tay' this week. Microsoft’s new AI chatbot went off the rails on Wednesday, posting a deluge of incredibly racist messages in response to users’ questions. The Internet’s favorite genocidal chatbot was accidentally reactivated last night.

AI chatbots act as virtual agents and advisers for filing claims, providing updates, and carrying out other basic tasks, freeing humans to handle more complex work. AI chatbots are also used for student feedback, teacher evaluation, and administrative support.

Tay Tweets: Microsoft releases software allowing anyone to create AI chatbots, after great success of their own. Microsoft’s Bot Framework will allow developers to build a range of chatbots.

Microsoft has now apologized for the offensive turn its Tay chatbot took within hours of being unleashed on Twitter. As artificial-intelligence expert Azeem Azhar told Business Insider, Microsoft’s Technology and Research and Bing teams, who are behind Tay, should have put some filters on her from the start. That way, she could refuse to respond to certain words (like “Holocaust” or “genocide”), or respond with a canned comment like “I don’t know anything about that.” She also should have been prevented from repeating comments, which seems to have been what caused some of the trouble. But people act horribly online all the time.
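To make the filtering idea concrete, here is a minimal Python sketch of the two safeguards mentioned above: a word blocklist that triggers a canned reply, and a check that stops the bot from simply repeating what a user said. This is only an illustration, not how Tay or any Microsoft product actually works. The names `BLOCKED_TERMS`, `CANNED_REPLY`, `is_parroting`, and `safe_reply` are all hypothetical, and the blocklist contains just the two example words from the text.

```python
import re

# Hypothetical blocklist: only the two example words from the article.
BLOCKED_TERMS = {"holocaust", "genocide"}
CANNED_REPLY = "I don't know anything about that."


def _normalize(text: str) -> str:
    """Lowercase the text and keep only word-like tokens, joined by spaces."""
    return " ".join(re.findall(r"[a-z0-9']+", text.lower()))


def contains_blocked_term(text: str) -> bool:
    """Return True if any blocked term appears as a whole word in the text."""
    return not BLOCKED_TERMS.isdisjoint(_normalize(text).split())


def is_parroting(user_message: str, candidate_reply: str, min_words: int = 3) -> bool:
    """Return True if the reply mostly echoes the user's message.

    Assumption for this sketch: an echo is either an exact match after
    normalization, or one text containing the other as a run of at least
    `min_words` whole words.
    """
    user = _normalize(user_message)
    reply = _normalize(candidate_reply)
    if not user or not reply:
        return False
    if user == reply:
        return True
    shorter, longer = sorted((user, reply), key=len)
    # Pad with spaces so matches start and end on word boundaries.
    return len(shorter.split()) >= min_words and f" {shorter} " in f" {longer} "


def safe_reply(user_message: str, candidate_reply: str) -> str:
    """Return the candidate reply, or the canned reply if a safeguard trips."""
    if contains_blocked_term(user_message) or contains_blocked_term(candidate_reply):
        return CANNED_REPLY
    if is_parroting(user_message, candidate_reply):
        return CANNED_REPLY
    return candidate_reply


if __name__ == "__main__":
    print(safe_reply("How are you?", "I'm great, thanks!"))
    print(safe_reply("Tell me about the Holocaust", "Sure, here is what I know"))
    print(safe_reply("Repeat after me: cats are evil", "Cats are evil"))
```

A static word list like this is easy to get around with misspellings, so it should be read as a first line of defense rather than a complete answer to the trolling described here.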
